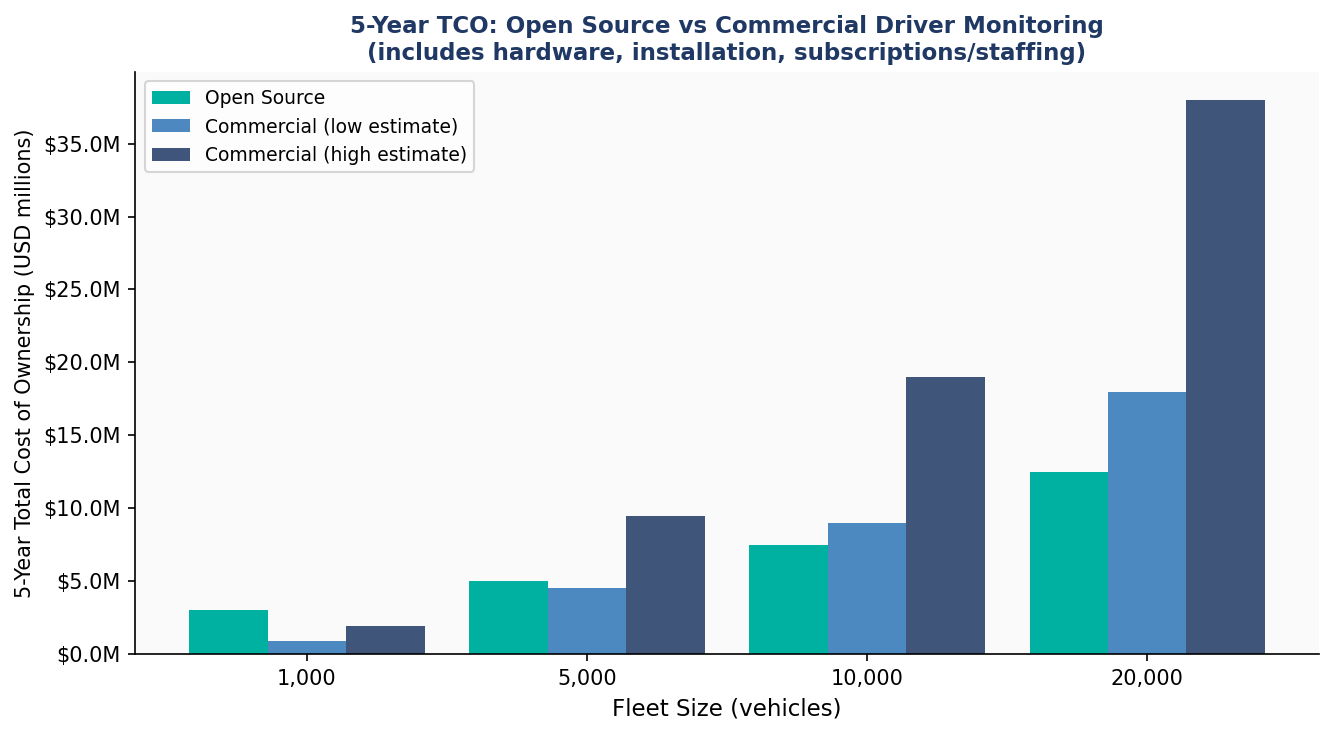

20,214 Facial Images Across 4 Classes

All images are 48×48 pixels in grayscale format. The test set is perfectly balanced at 32 images per class, ensuring accuracy figures are not inflated by any majority class.

| Split | Total | Happy | Neutral | Sad | Surprise |
|---|---|---|---|---|---|
| Training | 15,109 | 3,976 | 3,978 | 3,982 | 3,173 |
| Validation | 4,977 | 1,825 | 1,216 | 1,139 | 797 |
| Test | 128 | 32 | 32 | 32 | 32 |
| Total | 20,214 | 5,833 | 5,226 | 5,153 | 4,002 |
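The split counts can be checked for balance with a few lines of arithmetic. The following is a minimal sketch with the counts hard-coded from the table above; the helper names `class_shares` and `imbalance_ratio` are illustrative and not part of the original project.

```python
# Counts copied from the table above; class order is Happy, Neutral, Sad, Surprise.
CLASSES = ["Happy", "Neutral", "Sad", "Surprise"]
SPLITS = {
    "Training": [3976, 3978, 3982, 3173],
    "Validation": [1825, 1216, 1139, 797],
    "Test": [32, 32, 32, 32],
}


def class_shares(counts):
    """Fraction of a split that each class accounts for."""
    total = sum(counts)
    return [c / total for c in counts]


def imbalance_ratio(counts):
    """Largest class count divided by the smallest (1.0 means perfectly balanced)."""
    return max(counts) / min(counts)


for name, counts in SPLITS.items():
    shares = ", ".join(
        f"{cls} {share:.1%}" for cls, share in zip(CLASSES, class_shares(counts))
    )
    print(f"{name}: total={sum(counts)}, ratio={imbalance_ratio(counts):.2f} ({shares})")
```

By this measure the test split has a ratio of exactly 1.0, the training split is close to balanced apart from a smaller Surprise class, and the validation split is the least balanced, with roughly 2.3 times as many Happy images as Surprise images.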

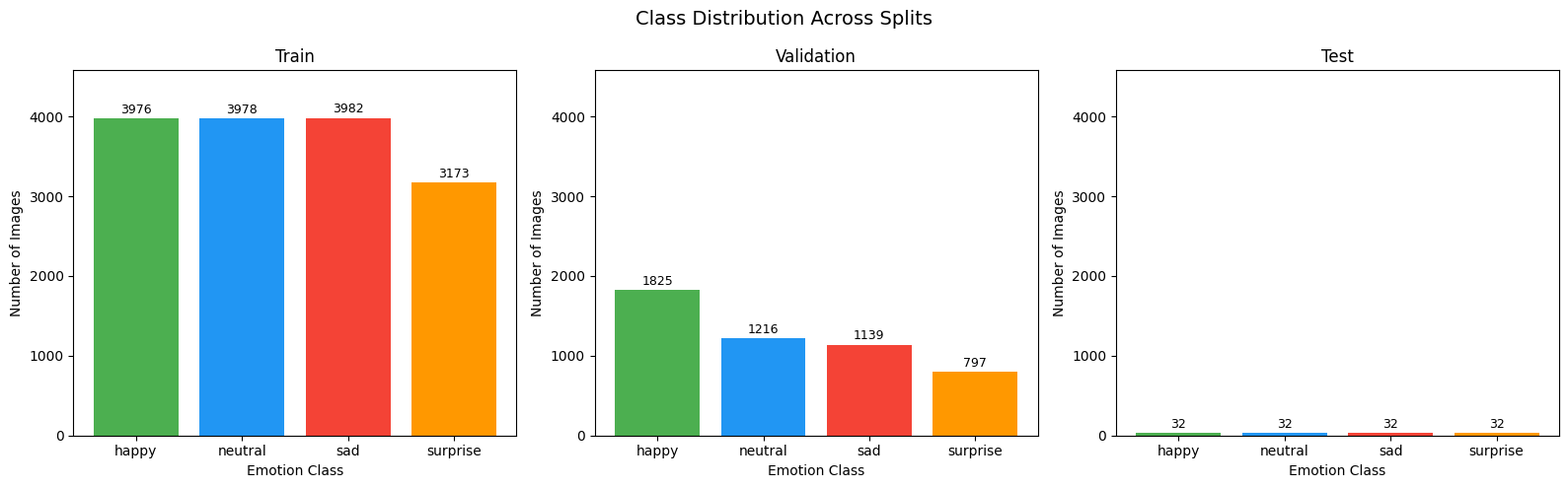

Pixel Intensity Analysis

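Per-class pixel statistics reveal the core classification challenge. Mean intensities for Happy, Neutral, and Sad fall within about 10 points of each other (120.72 to 130.62), with only Surprise noticeably brighter at 147.25, and standard deviations are nearly uniform (63.59 to 64.98). This suggests that spatial structure, not overall brightness, is the main discriminative signal.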

| Class | Mean Intensity | Std Dev | Visual Characteristics |
|---|---|---|---|
| 😊 Happy | 130.62 | 63.59 | Most visually distinct: broad smiles, Duchenne markers |
| 😐 Neutral | 123.99 | 64.98 | Defined by absence of expression; most ambiguous class |
| 😔 Sad | 120.72 | 64.63 | Only 3.27 points below Neutral mean |
| 😮 Surprise | 147.25 | 63.90 | Brightest class: wide eyes, open mouth |
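Statistics like those in the table above could be computed along the following lines. This is a sketch under assumptions rather than the original analysis code: it assumes the images are held in a NumPy array of shape `(N, 48, 48)` with integer class labels, and that mean and standard deviation are pooled over every pixel of every image in a class. The function name `per_class_pixel_stats` is hypothetical, and the demo uses random synthetic data, not the real dataset.

```python
import numpy as np


def per_class_pixel_stats(images, labels, class_names):
    """Return {class_name: (mean, std)}, pooled over all pixels of all images in the class."""
    stats = {}
    for idx, name in enumerate(class_names):
        # Cast to float so the statistics are not affected by uint8 overflow.
        pixels = images[labels == idx].astype(np.float64)
        stats[name] = (float(pixels.mean()), float(pixels.std()))
    return stats


# Synthetic stand-in data (assumption): 10 random 48x48 grayscale images per class.
rng = np.random.default_rng(0)
class_names = ["Happy", "Neutral", "Sad", "Surprise"]
images = rng.integers(0, 256, size=(40, 48, 48), dtype=np.uint8)
labels = np.repeat(np.arange(4), 10)

for name, (mean, std) in per_class_pixel_stats(images, labels, class_names).items():
    print(f"{name}: mean={mean:.2f}, std={std:.2f}")
```

Pooling over pixels is only one possible convention. Averaging per-image means and standard deviations instead would give somewhat different numbers, so the convention should match whatever produced the reported figures.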

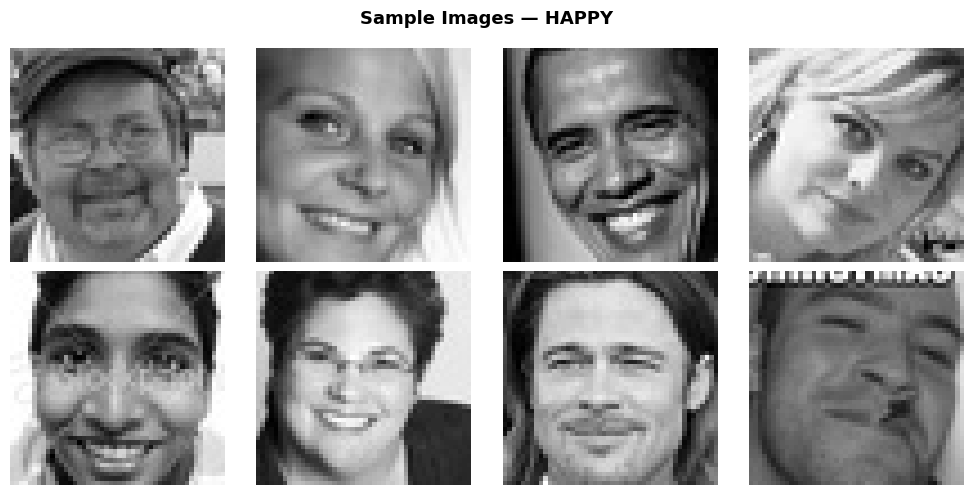

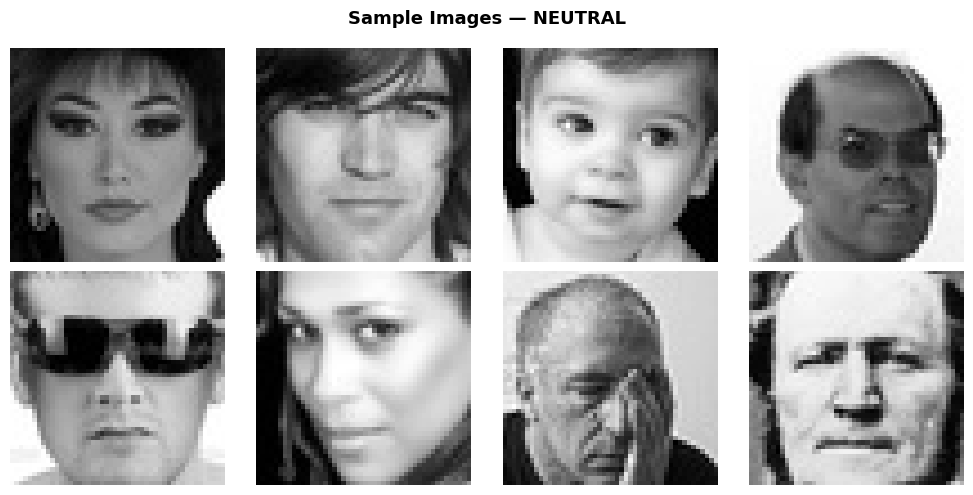

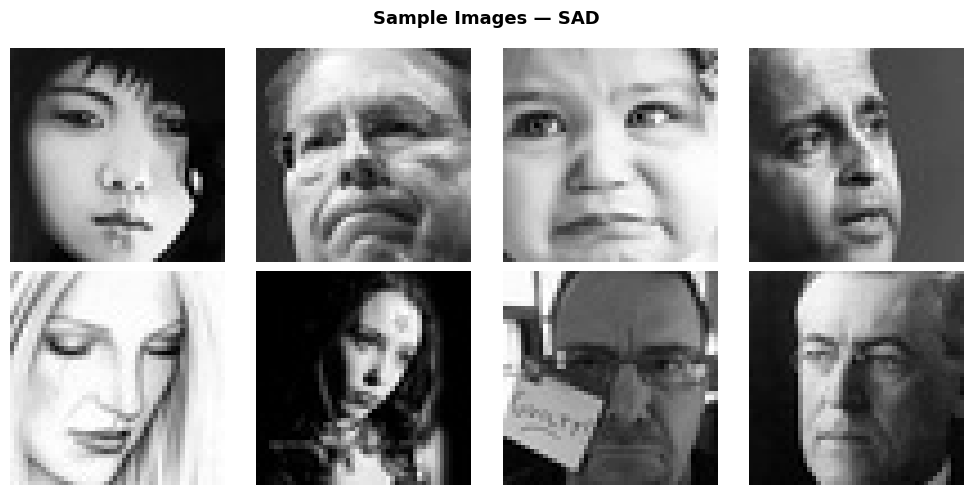

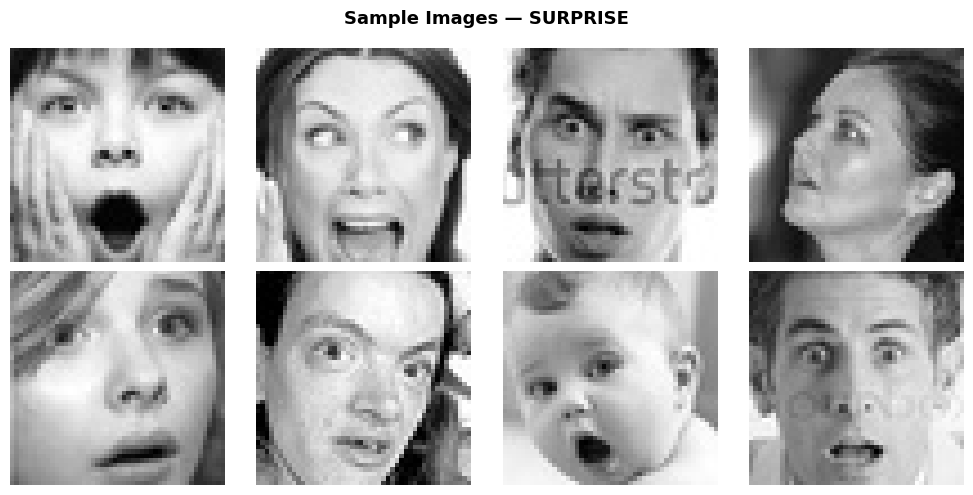

Sample Images by Class